Artificial Intelligence (AI) has revolutionized software development, offering developers tools that can generate code, automate testing, and boost productivity. With platforms like GitHub Copilot and ChatGPT, programmers can now build applications faster than ever before. However, this progress comes with unintended consequences.

One growing concern is AI code hallucination—a phenomenon where AI models generate code that seems correct but is flawed, insecure, or entirely fabricated. These “hallucinations” can introduce vulnerabilities into software systems, making them susceptible to attacks. As reliance on AI-generated code grows, so does the risk of these silent flaws being deployed in production environments.

What Are AI Code Hallucinations?

AI code hallucinations are instances where generative AI models produce code that appears syntactically or logically correct but contains hidden flaws. These errors often arise from the model's misunderstanding of context, outdated training data, or an inability to reason like a human developer. AI assistance can speed up development, but hallucinated code can introduce subtle bugs or security loopholes along the way.

Why AI Code Hallucinations Are Dangerous

These hallucinations are more than just programming quirks. In critical systems, flawed AI-generated code can lead to data breaches, privilege escalation, or system crashes. Attackers can exploit these weaknesses, especially if developers trust AI-generated outputs without rigorous validation. The false sense of security surrounding AI-generated code magnifies the problem.

Real-World Examples of AI-Generated Vulnerabilities

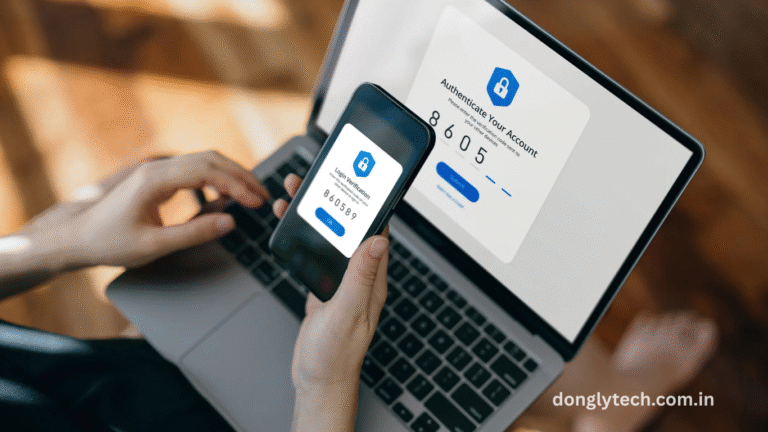

Security researchers have documented cases where AI-generated code introduced SQL injection vulnerabilities, weak cryptographic implementations, and improper authentication mechanisms. For example, some AI tools have recommended outdated or deprecated functions whose known weaknesses attackers can exploit. These examples underscore the need for scrutiny when using AI in security-sensitive applications.
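To make the SQL injection case concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table layout and the function names (`find_user_unsafe`, `find_user_safe`, `make_demo_db`) are hypothetical and exist only for illustration. They are not taken from any documented incident.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # Vulnerable pattern: user input is concatenated into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = "SELECT id, username FROM users WHERE username = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # Parameterized query: the driver passes the value separately from the
    # SQL text, so it is always treated as data.
    query = "SELECT id, username FROM users WHERE username = ?"
    return conn.execute(query, (username,)).fetchall()
```

With the input `x' OR '1'='1`, the unsafe version returns every row in the table, while the parameterized version returns no rows because no user has that literal name.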


How Developers Can Spot and Fix Hallucinated Code

Developers must take a proactive role in auditing AI-generated code. Cross-checking code against official documentation, using static code analysis tools, and performing security reviews are critical. Encouraging peer code reviews and integrating unit tests can help catch errors early. Rather than treating AI output as infallible, developers should approach it with cautious skepticism.
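As a rough illustration of an automated first pass, the sketch below uses Python's standard `ast` and `importlib` modules to flag two things in a generated snippet: imports that cannot be resolved in the current environment (a possible sign of a fabricated package) and calls that deserve manual review. The function names and the short `RISKY_CALLS` list are assumptions made for this example. A real static analysis tool covers far more, and a module that fails to resolve may simply not be installed.

```python
import ast
import importlib.util

# Illustrative, deliberately short list of calls worth a second look.
RISKY_CALLS = {"eval", "exec"}


def module_is_resolvable(name: str) -> bool:
    """Return True if the top-level module can be found in this environment."""
    try:
        return importlib.util.find_spec(name) is not None
    except (ImportError, ValueError):
        return False


def audit_snippet(source: str) -> list[str]:
    """Parse the snippet (without running it) and return review findings."""
    findings: list[tuple[int, str]] = []
    for node in ast.walk(ast.parse(source)):
        modules: list[str] = []
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            modules = [node.module]
        for module in modules:
            top_level = module.split(".")[0]
            if not module_is_resolvable(top_level):
                findings.append(
                    (node.lineno, f"module '{top_level}' not found in this environment")
                )
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in RISKY_CALLS
        ):
            findings.append(
                (node.lineno, f"call to {node.func.id}() needs manual review")
            )
    # Sort by line number so the report reads top to bottom.
    return [f"line {lineno}: {message}" for lineno, message in sorted(findings)]
```

A check like this only narrows down where to look. It does not replace reading the code against the official documentation or a proper security review.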

Role of Training Data in Code Hallucinations

The quality and diversity of training data directly influence an AI model’s output. If models are trained on flawed or outdated codebases, they may replicate or even amplify these errors. Moreover, public code repositories often contain insecure or poorly documented examples, which can skew AI behavior. Ensuring clean and secure training data is essential to reducing hallucinations.

AI Code Hallucinations in Open Source and Enterprise Environments

In open-source communities, developers may unknowingly contribute AI-generated code with hidden flaws, spreading vulnerabilities across shared projects. In enterprise environments, the risks multiply due to the scale and sensitivity of the data involved. AI code hallucinations can silently infiltrate mission-critical systems if not properly vetted, leading to costly security incidents.

Regulatory and Ethical Implications

The rise of AI code hallucinations raises questions about liability and accountability. If a security breach occurs due to flawed AI-generated code, who is responsible—the developer, the AI provider, or the organization? Regulatory bodies are beginning to explore guidelines for the safe use of AI in software development. Ethical standards must evolve alongside technology to address these challenges.

Impact on Developer Trust and Productivity

While AI promises to streamline coding, repeated exposure to hallucinated outputs can erode developer trust. Teams may spend more time debugging and verifying AI-generated code, offsetting productivity gains. The psychological impact of second-guessing every suggestion can also lead to fatigue and decision paralysis. Balancing trust and caution is key.

Frequently Asked Questions

What is a code hallucination in AI?

A code hallucination occurs when AI generates code that appears correct but is logically flawed, insecure, or simply wrong because the model misread the context or purpose of the request.

Why do AI models hallucinate code?

AI models hallucinate code because they rely on probability and pattern matching rather than understanding. Limited or outdated training data and ambiguous prompts also contribute to this issue.

Can AI-generated code be trusted in production?

While AI can assist development, its code should not be trusted blindly in production. All AI-generated code should undergo human review and testing.

Are AI code hallucinations a security threat?

Yes, hallucinated code can introduce critical vulnerabilities, especially in authentication, data handling, and encryption functions, posing serious security threats.
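As one example of how this can look in authentication code, the sketch below contrasts a weak password-hashing pattern (unsalted MD5) with a salted, iterated alternative built from Python's standard `hashlib`, `hmac`, and `os` modules. The function names and the default iteration count are assumptions chosen for illustration. Check current guidance before picking parameters for a real system.

```python
import hashlib
import hmac
import os


def hash_password_weak(password: str) -> str:
    # Weak pattern: MD5 is fast and unsalted, so identical passwords produce
    # identical hashes and brute-force guessing is cheap.
    return hashlib.md5(password.encode("utf-8")).hexdigest()


def hash_password(password: str, *, iterations: int = 600_000) -> tuple[bytes, bytes]:
    # Stronger pattern: a random per-password salt plus an iterated key
    # derivation function. The iteration count here is an assumed default.
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return salt, digest


def verify_password(
    password: str, salt: bytes, expected: bytes, *, iterations: int = 600_000
) -> bool:
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(digest, expected)
```

Both versions run without errors and "work" in a quick manual check, which is exactly why the weak one is easy to accept from a code suggestion without noticing the problem.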

How can developers identify hallucinated code?

Developers can identify hallucinated code by conducting manual reviews, using static analysis tools, checking against documentation, and running comprehensive tests.
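To illustrate the testing part, here is a small sketch with Python's `unittest`. It assumes a hypothetical helper that checks whether a file path stays inside a base directory. The naive version represents the kind of plausible-looking suggestion that passes a casual read, and the tests probe the edge cases that expose it. All function names and paths are invented for this example, and POSIX-style paths are assumed.

```python
import os
import unittest


def is_inside_naive(base: str, candidate: str) -> bool:
    # Flawed: a plain string-prefix check also accepts sibling directories
    # such as /srv/app-secrets when the base is /srv/app.
    return os.path.abspath(candidate).startswith(os.path.abspath(base))


def is_inside(base: str, candidate: str) -> bool:
    # Compare whole path components after resolving ".." and symlinks.
    base_path = os.path.realpath(base)
    target = os.path.realpath(candidate)
    return os.path.commonpath([base_path, target]) == base_path


class PathCheckTests(unittest.TestCase):
    def test_accepts_file_inside_base(self):
        self.assertTrue(is_inside("/srv/app", "/srv/app/static/logo.png"))

    def test_rejects_parent_traversal(self):
        self.assertFalse(is_inside("/srv/app", "/srv/app/../secrets/key"))

    def test_rejects_sibling_with_shared_prefix(self):
        self.assertFalse(is_inside("/srv/app", "/srv/app-secrets/key"))

    def test_naive_version_misses_the_sibling_case(self):
        # Documents the bug: the naive check wrongly reports "inside".
        self.assertTrue(is_inside_naive("/srv/app", "/srv/app-secrets/key"))
```

Saved in a test module, these cases can be run with `python -m unittest`. The point is less the specific helper than the habit of writing tests for the inputs an attacker would try, not only the happy path.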

What tools can detect insecure AI-generated code?

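Static analysis tools such as SonarQube and Checkmarx can scan AI-generated code for potential vulnerabilities and compliance issues. Linters such as ESLint mainly catch code-quality problems, but can also flag some insecure patterns when paired with security-focused plugins.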

Should AI be banned from writing security-critical code?

Rather than banning AI, developers should use it with caution and ensure that any AI-generated code is vetted through secure development practices.

How can organizations reduce the risks of AI hallucinations?

Organizations can reduce risks by training developers, enforcing code reviews, using secure coding standards, and deploying AI responsibly.

Conclusion

AI code hallucinations pose a serious challenge in modern software development, particularly in cybersecurity. As AI tools become more integrated into coding workflows, vigilance, proper vetting, and ethical practices are vital to minimize risk. Developers and organizations must strike a balance between innovation and safety, ensuring AI remains a helpful ally rather than a hidden liability. Always audit before you trust.